Control AI Crawlers: The Essential Guide for Every Webmaster

Picture waking up to discover your months-long blog series powering someone else’s AI model without your permission. If you’ve ever felt that sting, especially when SEO and brand integrity are on the line, here’s a high-impact tactic you can implement today. By adding a single authority file at the root of your domain, you state which AI crawlers may crawl, index, or train on your pages, and compliant crawlers will follow it. No convoluted scripts. No external services. Just crystal-clear directives that put you in the cockpit.

Why It Matters for SEO

In a landscape driven by AI, every word you publish is a strategic asset. Unchecked AI scraping doesn’t just pilfer your content; it can train competing models to churn out copy that mimics your expertise. By deploying llms.txt, you put your usage policy on record for AI crawlers, which helps preserve your unique insights, safeguard proprietary media, and reinforce your standing as the original source for both human and machine audiences.

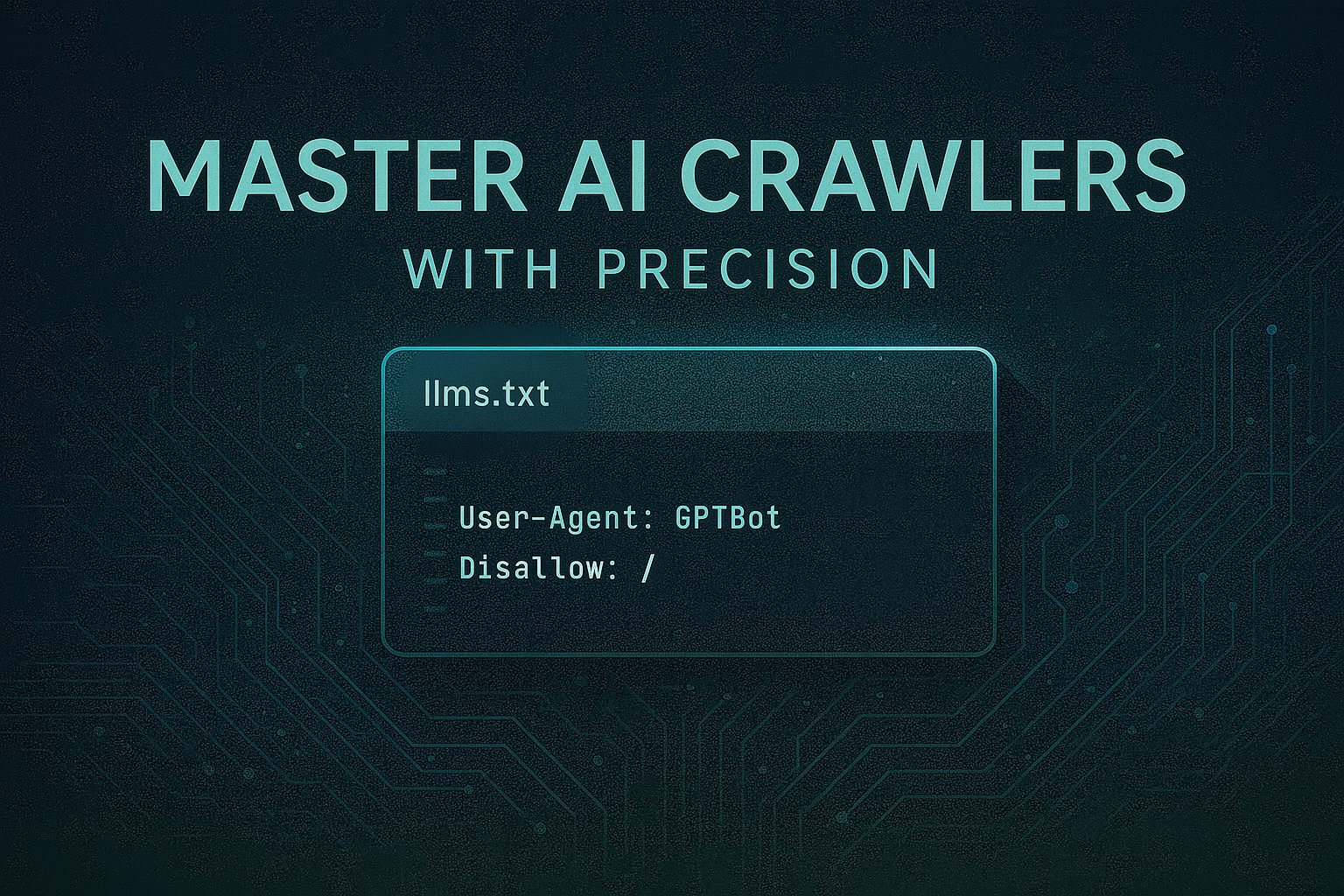

Meet llms.txt

Think of llms.txt as robots.txt’s AI-savvy sibling. LLMS stands for “Large Language Models,” and this simple text file communicates directly with AI-powered crawlers. When a bot like GPTBot or Google-Extended arrives, your llms.txt tells it whether your content is on the guest list—or barred from entry.

Key Benefits

- Active Gatekeeping: Stop unwanted scraping dead in its tracks.

- Transparent Oversight: See exactly which models can, or can’t, access your site.

- Brand Protection: Keep your tone, style, and proprietary assets under lock and key.

How to Deploy llms.txt

Getting started couldn’t be simpler:

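- Create the File: In your site’s top-level directory, add a plain-text file named llms.txt.

- List Your Rules: Specify the User-Agent directives you wish to enforce. For example:

    User-Agent: GPTBot
    Disallow: /

    User-Agent: Google-Extended
    Disallow: /

- Upload & Activate: Upload the file so it is served from the root of your domain. Compliant crawlers apply the policy the next time they fetch it; honoring it is voluntary on their part. Because OpenAI and Google document GPTBot and Google-Extended as robots.txt tokens, mirror the same rules in robots.txt.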
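If your blocklist grows, the rule file can be generated instead of typed by hand. The following is a minimal Python sketch, assuming the robots.txt-style syntax shown in the example above; `BLOCKED_AGENTS`, `build_rules`, and `write_rules` are hypothetical names chosen for illustration, and the web root path is whatever directory your server treats as the site root.

```python
from pathlib import Path

# Hypothetical blocklist: the crawler tokens named in this article.
BLOCKED_AGENTS = ["GPTBot", "Google-Extended"]


def build_rules(agents, path="/"):
    """Return one User-Agent/Disallow group per agent, separated by blank lines."""
    groups = [f"User-Agent: {agent}\nDisallow: {path}" for agent in agents]
    return "\n\n".join(groups) + "\n"


def write_rules(web_root, agents, filename="llms.txt"):
    """Write the generated rules into the given web root and return the file path."""
    target = Path(web_root) / filename
    target.write_text(build_rules(agents), encoding="utf-8")
    return target


rules_text = build_rules(BLOCKED_AGENTS)
print(rules_text)
```

Calling `write_rules` a second time with `filename="robots.txt"` is not recommended if you already maintain a robots.txt, since it would overwrite the file; in that case, paste the generated groups into the existing file instead.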

WordPress Tip: Leading SEO plugins now include a native toggle for llms.txt. A couple of clicks in your plugin settings, and your authority file is live—no manual edits required.

Real-World Success

When Vercel implemented llms.txt as part of their AI content strategy, they reported that 10% of their new signups now come directly from ChatGPT, underscoring how effectively guiding language models can drive qualified traffic.

And according to Search Engine Land, early adopters are already integrating llms.txt across major publishing platforms—highlighting its rapid uptake and growing importance in AI-first content management.

Best Practices for Ongoing Control

- Treat It Like Your Guest List: Every time a new AI tool emerges, ask yourself: “Do I want this bot at my party?” If not, add its name to your file.

- Check In Regularly: Quarterly, run a free “llms.txt validator” to confirm your rules are being enforced.

- Layer Your Defenses: Keep robots.txt updated alongside llms.txt, and consider simple extras like rate limits or basic authentication on sensitive pages.
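For a quick local check between validator runs, Python’s standard `urllib.robotparser` can parse User-Agent/Disallow rules and report which crawler names they block. This is a sketch under the assumption that your file uses the robots.txt-style syntax shown in this article. It only confirms how the rules parse, not that any crawler actually obeys them. `RULES`, `blocked_agents`, and `SomeOtherBot` are hypothetical names used for illustration.

```python
from urllib.robotparser import RobotFileParser

# Assumed file contents, copied from the example rules in this article.
RULES = """\
User-Agent: GPTBot
Disallow: /

User-Agent: Google-Extended
Disallow: /
"""


def blocked_agents(rules_text, agents, url="/"):
    """Map each agent name to True if the rules disallow it from fetching url."""
    parser = RobotFileParser()
    parser.parse(rules_text.splitlines())
    return {agent: not parser.can_fetch(agent, url) for agent in agents}


# "SomeOtherBot" is a made-up name standing in for a crawler you have not listed.
report = blocked_agents(RULES, ["GPTBot", "Google-Extended", "SomeOtherBot"])
for agent, blocked in report.items():
    print(f"{agent}: {'blocked' if blocked else 'allowed'}")
```

Any agent that shows as allowed is one the file does not mention, which makes this a convenient way to spot gaps in the guest list.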

Think of llms.txt as your site’s personal bodyguard in an AI-dominated world—not just an optional add-on, but the frontline defender every SEO pro needs. Deploy it today, and you’ll move from quietly hoping bots play fair to confidently calling the shots—keeping your content and brand reputation secure as AI continues its breakneck evolution.

Ready to lock down your content? Create your llms.txt now, share your experience in the comments, and stay tuned for more insider tips and tricks to elevate your site’s security and SEO prowess.

Rami Zebian

CEO, LeLaboDigital